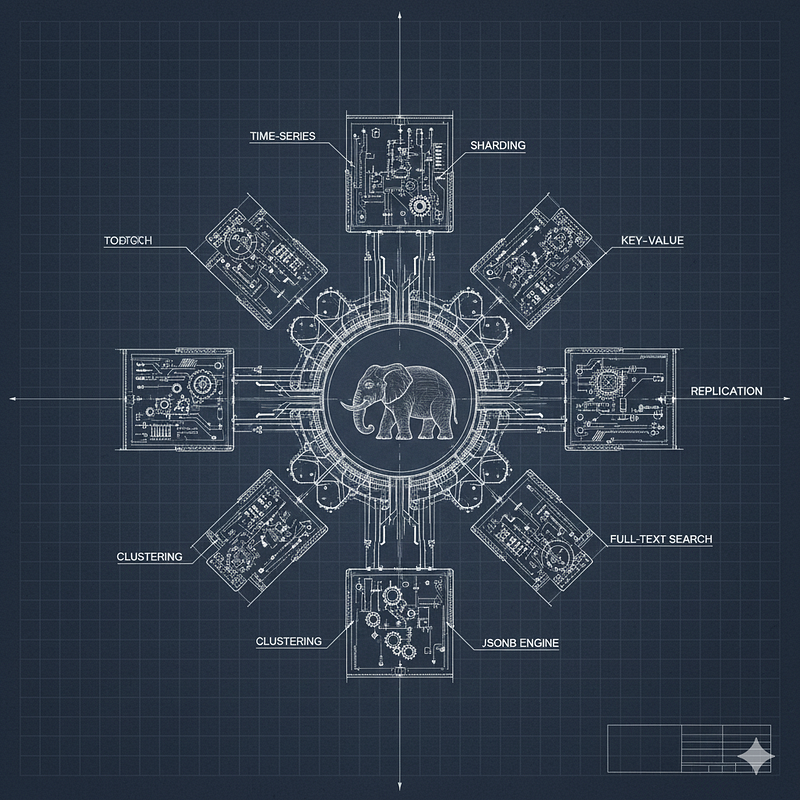

9 Reasons Why PostgreSQL is All You Need

9 Reasons Why PostgreSQL is All You Need

A practical guide for engineers who are tired of database sprawl. Stop managing five different systems and start mastering one.

Table of Contents

- Documents: Why JSONB makes MongoDB redundant for most use cases.

- Queues: Using

SKIP LOCKEDto replace RabbitMQ or SQS for background jobs. - Search: Integrated Full-Text Search that competes with Elasticsearch.

- Vectors: How

pgvectorbrings AI and LLM capabilities directly to your data. - Persistence: Using Postgres as a reliable Key-Value store instead of Redis.

- Scaling: Horizontal sharding with Citus for massive OLAP workloads.

- Time-Series: High-performance metrics and events handling with TimescaleDB.

- Geography: Industry-leading spatial analysis and GIS with PostGIS.

- Simplicity: One backup, one monitoring tool, and one security policy for everything.

1. Documents: Why JSONB makes MongoDB redundant

For years, developers reached for MongoDB whenever they needed schema flexibility. The pitch was simple: “Store data as it comes, no rigid tables required.” However, with the introduction and evolution of the JSONB (Binary JSON) data type, PostgreSQL has effectively eliminated the need for a separate document store in 90% of use cases.

The Hidden Cost of NoSQL Sprawl

When you introduce a dedicated NoSQL database like MongoDB alongside your relational store, you create a “Data Silo” problem:

- No Cross-Database Transactions: You lose ACID guarantees. If an order is saved in Postgres but the metadata fails in Mongo, your data is inconsistent.

- Operational Tax: You now have two sets of backups, two monitoring stacks, and two security policies to maintain.

- Application-Level Joins: Your code becomes messy because it has to fetch data from two places and manually stitch it together.

The PostgreSQL Edge: JSONB vs. JSON

PostgreSQL offers two types, but JSONB is the game-changer. Unlike the plain JSON type which stores text, JSONB stores data in a decomposed binary format.

- Faster Processing: It doesn’t need to be re-parsed every time it’s read.

- Indexing: It supports GIN (Generalized Inverted Index) indexing, allowing you to query deep inside a nested document as fast as a standard column.

Practical Implementation

Setting up a document store in PostgreSQL is as simple as creating a table. You get the flexibility of NoSQL with the power of SQL.

-- Create a table with a JSONB column

CREATE TABLE user_profiles (

id serial PRIMARY KEY,

user_id uuid NOT NULL, -- Relational integrity

metadata jsonb -- Document flexibility

);

-- Insert a complex, nested document

INSERT INTO user_profiles (user_id, metadata)

VALUES ('550e8400-e29b-41d4-a716-446655440000', '{

"name": "Oz",

"role": "SRE",

"tags": ["postgres", "security", "linux"],

"preferences": {"theme": "dark", "layout": "compact"}

}');

-- Create a GIN index to search inside the metadata column

CREATE INDEX idx_user_metadata_gin ON user_profiles USING GIN (metadata);High-Performance Querying

You can query nested keys or array elements using intuitive operators:

-- Query: Find users who have "security" in their tags list

SELECT metadata->>'name' AS name

FROM user_profiles

WHERE metadata @> '{"tags": ["security"]}';

-- Atomic Update: Change a nested value without overwriting the whole document

UPDATE user_profiles

SET metadata = jsonb_set(metadata, '{preferences, theme}', '"light"')

WHERE user_id = '550e8400-e29b-41d4-a716-446655440000';Key Takeaway

PostgreSQL allows for a Hybrid Schema. You can have a strict, validated column for email and created_at right next to a flexible metadata blob for everything else. You get the best of both worlds: the safety of a relational database and the speed of a document store.

2. Queues: Replacing RabbitMQ/SQS with SKIP LOCKED

In many architectures, developers immediately reach for RabbitMQ, Kafka, or AWS SQS as soon as they need to process background jobs (like sending an email or processing an image). While these are great tools, they introduce a significant overhead: the dual-write problem.

The Problem: Atomicity and “Zombies”

If you save a user to your database and then try to push a “Send Welcome Email” job to a separate broker, one of them might fail. If the DB commit succeeds but the broker push fails, your user never gets the email. To solve this, you usually need a complex “Outbox Pattern.”

The PostgreSQL Solution: SKIP LOCKED

Introduced in version 9.5, the FOR UPDATE SKIP LOCKED clause allows you to treat a standard table as a high-performance, ACID-compliant queue.

Practical Implementation

Instead of a separate broker, you create a jobs table within your existing database.

-- Create a robust jobs table

CREATE TABLE task_queue (

id serial PRIMARY KEY,

payload jsonb NOT NULL,

status text DEFAULT 'pending',

locked_at timestamp,

created_at timestamp DEFAULT now()

);

-- Index for performance

CREATE INDEX idx_task_queue_pending ON task_queue (id)

WHERE status = 'pending';The “Magic” Query

This is how multiple workers can safely pull jobs from the queue without stepping on each other’s toes:

-- Atomic fetch and lock:

-- Find the next pending job, lock it, and ignore jobs locked by other workers.

BEGIN;

WITH next_job AS (

SELECT id

FROM task_queue

WHERE status = 'pending'

ORDER BY id ASC

FOR UPDATE SKIP LOCKED

LIMIT 1

)

UPDATE task_queue

SET status = 'processing', locked_at = now()

FROM next_job

WHERE task_queue.id = next_job.id

RETURNING task_queue.id, task_queue.payload;

COMMIT;Why This Wins

- Transaction Safety: The job is created in the same transaction as your business logic. If the user isn’t saved, the job isn’t created. Period.

- Zero Infrastructure: No extra servers to patch, no extra ports to open, and no extra monthly costs.

- Powerful Features: Want to prioritize jobs? Just add a

prioritycolumn and change yourORDER BY. Want to delay a job? Add arun_atcolumn.

Key Takeaway

For most applications processing thousands (or even a few millions) of jobs per day, PostgreSQL is a more reliable and simpler queue than a dedicated message broker. You keep your stack lean and your data consistent.

3. Search: Beating Elasticsearch with Full-Text Search

Elasticsearch is powerful, but it is a resource hog. It requires significant RAM, a complex synchronization logic to keep it updated with your primary DB, and a steep learning curve for its DSL (Domain Specific Language). For most applications, PostgreSQL’s native Full-Text Search (FTS) and Trigrams are more than enough.

The Sync Nightmare

When you use a dedicated search engine, you have to deal with “Eventual Consistency.” There is always a delay between saving data in Postgres and seeing it in your search results. Furthermore, if your sync worker crashes, your search index becomes a “stale” lie.

The PostgreSQL Solution: tsvector and pg_trgm

PostgreSQL provides built-in support for linguistic search (stemming, stop words, ranking) and fuzzy matching (typo tolerance).

Practical Implementation: Professional Search

You don’t need a separate indexer. You can create a searchable index directly on your columns.

-- Enable the trigram extension for fuzzy matching (typo tolerance)

CREATE EXTENSION IF NOT EXISTS pg_trgm;

-- Create a table with content to search

CREATE TABLE articles (

id serial PRIMARY KEY,

title text,

body text,

search_vector tsvector -- Optimized for linguistic search

);

-- Indexing for speed

CREATE INDEX idx_articles_fts ON articles USING GIN (search_vector);

CREATE INDEX idx_articles_title_trgm ON articles USING GIN (title gin_trgm_ops);The Power of Linguistic Search

Postgres understands that “run,” “running,” and “ran” are the same word (stemming).

-- Search with ranking: Find articles about 'Postgres security'

SELECT title, ts_rank(search_vector, query) as rank

FROM articles, to_tsquery('english', 'Postgres & security') query

WHERE search_vector @@ query

ORDER BY rank DESC;

-- Fuzzy Search: Find 'PostgreSQL' even if the user types 'Postgre' (typo tolerance)

SELECT title

FROM articles

WHERE title % 'Postgre';Why This Wins

- Instant Updates: The moment you

COMMITa change to an article, it is searchable. No sync delays. - ACID Compliance: Your search index is never “out of sync” with your data because they live in the same transaction.

- Advanced Features: Postgres supports weighting (giving titles more importance than bodies), highlighting (snippets), and multiple languages.

Key Takeaway

Unless you are building the next Google or dealing with petabytes of logs, PostgreSQL’s search capabilities will handle your needs with better consistency and zero extra infrastructure cost.

4. Vectors: AI and LLM Integration with pgvector

To build AI features like Semantic Search (searching by meaning rather than keywords) or RAG (Retrieval-Augmented Generation), you need to store and query “embeddings” — mathematical representations of text or images.

The Overhead of Specialized Vector DBs

Adding a standalone vector database creates another “Data Silo.” You have to manage the metadata (like user IDs, timestamps, or categories) in Postgres and the vectors in another system. Joining these two datasets is a performance nightmare and a complex coding task.

The PostgreSQL Solution: pgvector

pgvector is an open-source extension that allows you to store, index, and query vectors directly alongside your relational data. It supports distance metrics like Euclidean, Cosine, and Inner Product.

Practical Implementation

You can transform a standard table into an AI-ready store with a single extension.

-- Enable the vector extension

CREATE EXTENSION IF NOT EXISTS vector;

-- Create a table for AI-powered document search

CREATE TABLE document_embeddings (

id serial PRIMARY KEY,

content text,

embedding vector(1536) -- 1536 is the dimension for OpenAI embeddings

);

-- Use HNSW (Hierarchical Navigable Small World) index for lightning-fast search

CREATE INDEX ON document_embeddings USING hnsw (embedding vector_cosine_ops);Querying the “Meaning”

Instead of looking for keywords, you look for “proximity” in vector space.

-- Find the top 5 documents most semantically similar to a user's query

SELECT content, 1 - (embedding <=> '[0.012, -0.023, ...]') AS similarity

FROM document_embeddings

ORDER BY similarity DESC

LIMIT 5;Why This Wins

- Relational Filtering: This is the killer feature. You can ask: “Find the most similar documents but only those created in the last 24 hours by Premium users.” A dedicated vector DB struggles with these complex relational joins.

- Consistency: When you delete a user’s record, their embeddings are deleted instantly in the same transaction.

- Mature Tooling: You can use all your existing Postgres backup (pgBackRest), monitoring, and security (RLS) tools on your AI data.

Key Takeaway

Vector search is just another data type. By using pgvector, you treat your AI embeddings as first-class citizens in your existing database, simplifying your AI architecture significantly.

5. Persistence: Replacing Redis with Postgres as a Key-Value Store

Redis is incredible for transient, “fire-and-forget” data. However, many teams use Redis to store critical data that must persist — like user sessions, feature flags, or configuration states. When Redis is used this way, you have to worry about persistence configurations (RDB/AOF) and the risk of data loss during a crash.

The Problem: The “Cache-Miss” Complexity

When you use a separate Key-Value store, your application logic becomes:

- Check Redis.

- If it’s a miss, query Postgres.

- Update Redis.

- Return the data. This “Cache-Aside” pattern introduces complexity and the risk of “Cache Stampedes” or stale data.

The PostgreSQL Solution: Unlogged Tables & Key-Value Logic

If you need high-speed Key-Value access with the safety of a real database, Postgres offers two powerful tools: JSONB (for structure) and UNLOGGED tables (for speed).

Practical Implementation

You can create a specialized table that behaves like a persistent cache but lives inside your DB.

-- Use an UNLOGGED table for maximum speed (skips the Write-Ahead Log)

-- Note: Data in unlogged tables is lost on a crash, use standard tables for 100% persistence.

CREATE UNLOGGED TABLE kv_store (

key text PRIMARY KEY,

value jsonb,

expires_at timestamp

);

-- Index for expiration cleanup

CREATE INDEX idx_kv_expiry ON kv_store (expires_at);Upserting Data (Atomic Set)

Just like a Redis SET, you can perform an atomic "Upsert" (Update or Insert) in one line.

INSERT INTO kv_store (key, value, expires_at)

VALUES ('user_123_session', '{"theme": "dark", "lang": "en"}', now() + interval '1 hour')

ON CONFLICT (key)

DO UPDATE SET value = EXCLUDED.value, expires_at = EXCLUDED.expires_at;Why This Wins

- ACID Persistence: If you use a regular (logged) table, your Key-Value data is 100% safe. If the power goes out, your sessions are still there.

- Complex Queries: Unlike Redis, you can query inside your values. “Find all keys where the value has a theme set to ‘dark’.”

- Reduced Latency: If your app is already talking to Postgres, adding a second connection to Redis actually increases the total network overhead and connection management complexity.

Key Takeaway

If your “cache” data needs to be queried, joined with other tables, or safely persisted, don’t build a complex sync logic with Redis. Use a dedicated table in Postgres and keep your architecture flat.

6. Scaling: Distributed Power with Citus

The common trap for growing companies is the “Database Migration Panic.” When a single server hits its CPU or storage limit, teams often abandon Postgres for complex distributed systems. Citus (now an open-source extension) solves this by transforming PostgreSQL into a distributed database that shards data across multiple nodes.

The Problem: The “Big Data” Migration

Moving to a specialized OLAP (Online Analytical Processing) or a distributed NoSQL system like Cassandra means:

- Rewriting Queries: You lose standard SQL support or join capabilities.

- ETL Complexity: You have to build pipelines to move data from your “source of truth” to your “scaling” database.

- Operational Friction: Managing a cluster of specialized nodes requires a dedicated team.

The PostgreSQL Solution: Citus and Sharding

Citus extends PostgreSQL with the ability to distribute tables and queries across a cluster of machines. It transparently routes your SQL queries to the correct nodes, giving you horizontal scale without changing your application code.

Practical Implementation

With Citus, you designate a “Distribution Column” (usually a Tenant ID or User ID) to shard your data.

-- Enable Citus extension

CREATE EXTENSION citus;

-- Create a large events table

CREATE TABLE user_events (

id bigserial,

user_id int,

event_type text,

created_at timestamp

);

-- Distribute the table across workers based on user_id

-- This ensures all data for a specific user stays on the same node

SELECT create_distributed_table('user_events', 'user_id');High-Performance Distributed Queries

When you query the coordinator, it parallelizes the work. A query that would take minutes on a single machine takes seconds on a Citus cluster.

-- This query runs in parallel across all worker nodes

SELECT user_id, count(*)

FROM user_events

WHERE created_at > now() - interval '7 days'

GROUP BY user_id;Why This Wins

- Multi-Tenant Excellence: If you are building a SaaS, Citus allows you to scale to millions of tenants while keeping each tenant’s data logically grouped together.

- Standard SQL: You don’t lose Joins, Constraints, or Transactions. Citus handles the distributed transaction logic for you.

- No ETL Required: Your transactional data (OLTP) and your analytical data (OLAP) live in the same cluster. You can run real-time analytics on live data.

Key Takeaway

You don’t need a “Warehouse” for your first 100TBs of data. By using Citus, you can scale PostgreSQL horizontally, keeping your architecture simple while gaining the performance of a distributed cluster.

Moving from horizontal scaling to the specialized world of high-velocity metrics. When teams start dealing with millions of sensor readings, logs, or stock prices, they often think they need a dedicated time-series database (TSDB) like InfluxDB or Prometheus.

7. Time-Series: High-Velocity Metrics with TimescaleDB

Handling time-series data in a standard relational database eventually leads to a performance wall. As your indexes grow massive, insert speeds plummet. This is where TimescaleDB — an open-source extension — transforms Postgres into a top-tier TSDB.

The Problem: The “Index Bloat” Wall

In a traditional database, every new row requires updating a massive B-tree index. Once that index exceeds the size of your RAM, disk I/O takes over, and your ingestion rate crashes. Dedicated TSDBs solve this but force you into a new ecosystem with limited SQL support and poor relational joining.

The PostgreSQL Solution: Hypertables and Chunking

TimescaleDB introduces the concept of Hypertables. It automatically partitions your data into “chunks” based on time. Since each chunk’s index fits into RAM, your ingestion speed stays constant, even as you hit billions of rows.

Practical Implementation

You can turn any standard table into a high-performance hypertable with a single command.

-- Enable TimescaleDB extension

CREATE EXTENSION IF NOT EXISTS timescaledb;

-- Create a standard table for IoT sensors

CREATE TABLE sensor_data (

time TIMESTAMPTZ NOT NULL,

sensor_id INTEGER NOT NULL,

temperature DOUBLE PRECISION NULL,

humidity DOUBLE PRECISION NULL

);

-- Convert to hypertable, partitioned by 1-day intervals

SELECT create_hypertable('sensor_data', 'time', chunk_time_interval => INTERVAL '1 day');Advanced Data Management

TimescaleDB allows you to automate data lifecycle tasks that are a nightmare in standard SQL.

-- 1. Compression: Shrink your data by 90% while keeping it queryable

ALTER TABLE sensor_data SET (

timescaledb.compress,

timescaledb.compress_segmentby = 'sensor_id'

);

-- 2. Continuous Aggregates: Real-time materialized views for dashboards

CREATE MATERIALIZED VIEW hourly_avg_temp

WITH (timescaledb.continuous) AS

SELECT time_bucket('1 hour', time) AS bucket,

sensor_id,

AVG(temperature)

FROM sensor_data

GROUP BY bucket, sensor_id;Why This Wins

- Relational Context: This is the ultimate advantage. You can join your raw metrics with your

usersordevicestable. "Show me the average temperature for devices owned by customers in the 'Premium' tier." Dedicated TSDBs can't do this easily. - Familiar SQL: No need to learn Flux or PromQL. Use the SQL your team already knows.

- Retention Policies: Automatically drop old data with simple policies, keeping your storage costs under control.

Key Takeaway

PostgreSQL is a time-series beast. By adding TimescaleDB, you get the ingestion speed and compression of a specialized TSDB without the pain of managing another database or losing your relational joins.

8. Geography: Industry-Leading Spatial Analysis with PostGIS

If your application needs to handle location data — calculating distances, finding points within a radius, or mapping complex boundaries — you might consider specialized tools. However, PostGIS turns PostgreSQL into the most powerful spatial engine in the world.

The Problem: The “Flat Earth” Limitation

Standard databases treat coordinates as simple numbers on a flat plane. But the earth is an ellipsoid. Calculating the distance between London and New York on a flat grid vs. a curved surface leads to massive errors. Most NoSQL “geo” features are limited to simple “points-in-a-circle” queries and lack support for complex polygons or spatial joins.

The PostgreSQL Solution: PostGIS

PostGIS adds support for geographic objects (Points, LineStrings, Polygons) and allows you to run spatial queries using real-world coordinates (latitude/longitude) with extreme precision.

Practical Implementation

You can transform your database into a mapping engine with one extension.

-- Enable the PostGIS extension

CREATE EXTENSION IF NOT EXISTS postgis;

-- Create a table for retail stores with a 'geography' column

CREATE TABLE retail_stores (

id serial PRIMARY KEY,

name text,

location geography(POINT, 4326) -- 4326 is the standard WGS 84 (GPS)

);

-- Add a spatial index for lightning-fast proximity searches

CREATE INDEX idx_stores_location ON retail_stores USING GIST (location);Advanced Spatial Querying

PostGIS allows you to ask complex questions that would be impossible in a standard database.

-- 1. Proximity Search: Find stores within 5km of a user

SELECT name, ST_Distance(location, 'SRID=4326;POINT(-122.33 47.60)'::geography) as distance

FROM retail_stores

WHERE ST_DWithin(location, 'SRID=4326;POINT(-122.33 47.60)'::geography, 5000)

ORDER BY distance;

-- 2. Spatial Join: Find how many delivery drivers are currently inside a specific neighborhood polygon

SELECT count(drivers.id), neighborhoods.name

FROM drivers

JOIN neighborhoods ON ST_Contains(neighborhoods.geom, drivers.last_location)

GROUP BY neighborhoods.name;Why This Wins

- Precision: PostGIS handles the complex math of the earth’s curvature (Geodetic math) automatically.

- Massive Feature Set: With over 1,000 spatial functions, you can perform routing, 3D analysis, and raster processing.

- Topological Integrity: You can ensure that your boundaries (like city borders) don’t overlap or have gaps, a feature dedicated NoSQL stores simply don’t have.

Key Takeaway

If you have location data, you don’t need a specialized GIS server. PostGIS provides a professional-grade spatial toolkit that integrates perfectly with your existing relational data, making it the only choice for modern mapping and logistics apps.

9. Simplicity: The One-Database Strategy

The “Single Source of Truth” (SSoT) is often discussed in business logic, but it’s even more critical in infrastructure. When you consolidate your Document, Queue, Search, Vector, and Spatial data into PostgreSQL, you eliminate the “Data Silo” effect.

The Operational Tax of Polyglot Persistence

Maintaining a “Best-of-Breed” stack (Mongo + Redis + Elasticsearch + RabbitMQ) sounds great in theory, but in practice, it leads to:

- Fragmented Backups: You have five different backup schedules and five different ways to fail a restore.

- Security Gaps: You must manage five different sets of firewall rules, encryption keys, and user access policies.

- Synchronization Issues: Keeping data consistent across five different engines is a nightmare that often leads to “ghost data” or stale results.

The PostgreSQL Solution: Unified Management

By staying within the PostgreSQL ecosystem, you simplify your entire operational lifecycle:

-- One Security Policy (Row Level Security) for ALL data types

ALTER TABLE app_data ENABLE ROW LEVEL SECURITY;

CREATE POLICY tenant_isolation ON app_data

USING (tenant_id = current_setting('app.current_tenant'));Why This Wins

- One Skillset to Rule Them All: Your team only needs to master PostgreSQL. They don’t need to learn a dozen different DSLs or specialized query languages.

- Unified Monitoring: Use one tool (like

pg_stat_statementsorprometheus-postgres-exporter) to monitor your entire data platform. - Atomic Refactoring: Moving a feature from a JSONB döküman to a relational column is a single

ALTER TABLEaway, rather than a massive cross-database migration project. - Predictable Costs: It is significantly cheaper to scale one large PostgreSQL instance (or Citus cluster) than to pay for five separate managed cloud databases.

Key Takeaway

PostgreSQL is no longer just a “Relational Database” — it is a Unified Data Platform. By pushing Postgres to its limits, you reduce your architectural surface area, decrease your “Bus Factor,” and allow your team to focus on building features rather than managing infrastructure.

Final Words

PostgreSQL has evolved into the “Swiss Army Knife” of the modern backend. From AI vectors to real-time queues, it proves that you don’t need to trade simplicity for power.

Looking to harden the security of your new “Everything-in-Postgres” instance? Check out my latest practical guide: 20 PostgreSQL Security Tips

← PostgreSQL Blog

← PostgreSQL Blog